Last week Engine Yard announced the winners of their inaugural Engine Yard Innovators Awards.

I was both honored and surprised when I learned that I had been chosen as the Engine Yard Innovator in the DevOps category.

“Our DevOps category winner is Nathen Harvey. A devops guru who has been travelling from conference to conference evangelizing the use of Chef, Nathen is known for his ‘Rails With Chef’ proficiency. He works to help others understand the importance of backend compatibility.”

I was asked to write a post about some of my work. This seemed like a good time to reflect on what I’ve been doing for the past few years.

In late 2009, I joined CustomInk to head up the Web Operations team. While there, I was able to help drive the adoption of many DevOps practices including automation, continuous delivery, collaboration across teams, expanded responsibility, and participation in open source communities.

At CustomInk, we transformed our infrastructure from one that was primarily hand-crafted, static, and managed by a few people to one that is flexible, automatically provisioned, and managed by many. We changed the way we deployed software, going from two deploys a month to multiple deploys each day. Developers were no longer stuck waiting for the Operations team to deploy code, and the Operations team no longer played the role of “merge monkey.” We also redefined what it meant for a developer to be “done” with a bit of functionality. A commit to the master branch was no longer sufficient; the functionality wasn’t “done” until it was in production.

What were the results of these changes? We increased the number of deploys while dramatically reducing the number of rollbacks. We reduced the time it took features to go from planning to production, with the goal of minimizing the amount of work in progress at any given time. Developers and Operations collaborated on more projects, each helping the other improve their skills, techniques, and processes. Our addiction to automation allowed us to deliver more value to our customers faster than ever before.

Many things enabled us to make such dramatic changes in our organization. One of the most important catalysts was what we were learning from others in the technical community. We took ideas from other companies like Etsy and modified them to fit our needs. We learned about new tools and techniques from blog posts, podcasts, and conferences. We took time to learn and experiment with new tools, technologies, and techniques. We also felt it was important to give back to the community.

I helped launch EngineerInk, the CustomInk Technology Blog, and @custominktech, the Twitter account for sharing CustomInk’s technology news. I also co-founded the Washington DC MongoDB Users Group and DevOpsDC. Both groups meet regularly to exchange ideas, share knowledge, and network. We are also known to enjoy a craft beer or two at each meeting.

I’ve been lucky enough to share some of our successes at various conferences including RailsConf.

The transformation, sharing, and success we enjoyed at CustomInk would not have been possible without the attitude, dedication, and drive of the people involved. When it comes to technology, though, the one tool that most directly enabled these changes was Chef, the configuration management framework from Opscode. On EngineerInk, I’ve written about our decision to use Chef, along with quite a few other articles about it. Sure, Chef is a great and very powerful tool, but there’s a lot more to Chef than a bunch of Ruby code.

When I first started working with Chef, it reminded me of Rails when I first started using it back in 2007. Here was a framework built around a strong, vibrant, and welcoming community. Lots of building blocks (cookbooks, knife plugins, etc.) were being developed and shared. Chef, like Ruby, felt dedicated to developer happiness. My best days at the office were the ones where I spent most of my time automating with Chef. In early 2012, I joined Bryan Berry on his Food Fight Show podcast to share news of newly published Chef cookbooks in the “What’s Cookin’” report. I’ve since joined the show as a co-host and have been fortunate enough to interview some of the thought leaders in the DevOps movement. I’ve also had the opportunity to speak about Chef at a number of conferences, including RubyNation, MongoDB conferences, and #ChefConf.

In August 2012, I left CustomInk to work as a Technical Community Manager at Opscode. This allows me to devote even more time to evangelizing Chef and helping people learn it.

DevOps is not about tool choices and Chef isn’t required to adopt DevOps practices in your organization. However, DevOps does require passionate individuals who are excited to tackle tough challenges and enjoy working with technology, their colleagues, and the larger technical and business communities. For me, Chef is a tool and a community that make me happy, keep me passionate about the work I’m doing, and encourage me to share with others.

I would like to thank the entire team at Engine Yard for their continued support of the Ruby, PHP, Open Source, and DevOps communities. I truly am humbled to be selected as the DevOps Innovator.

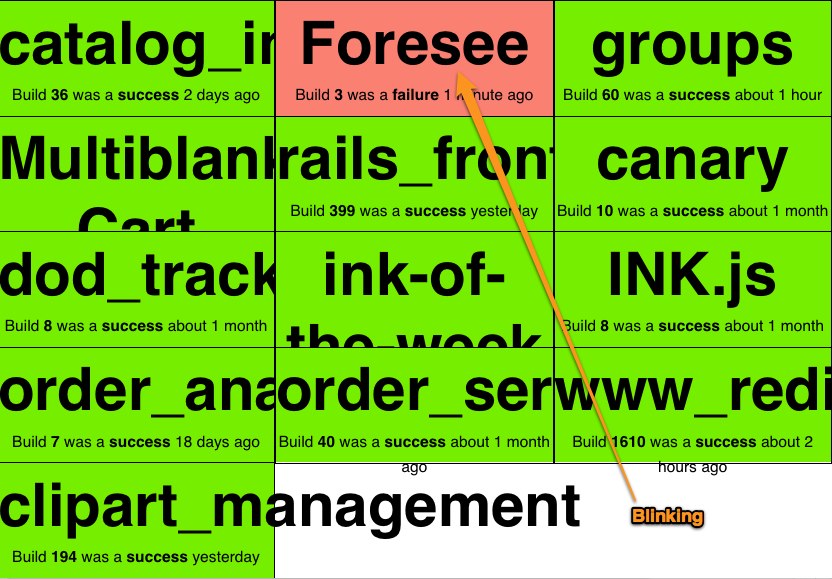

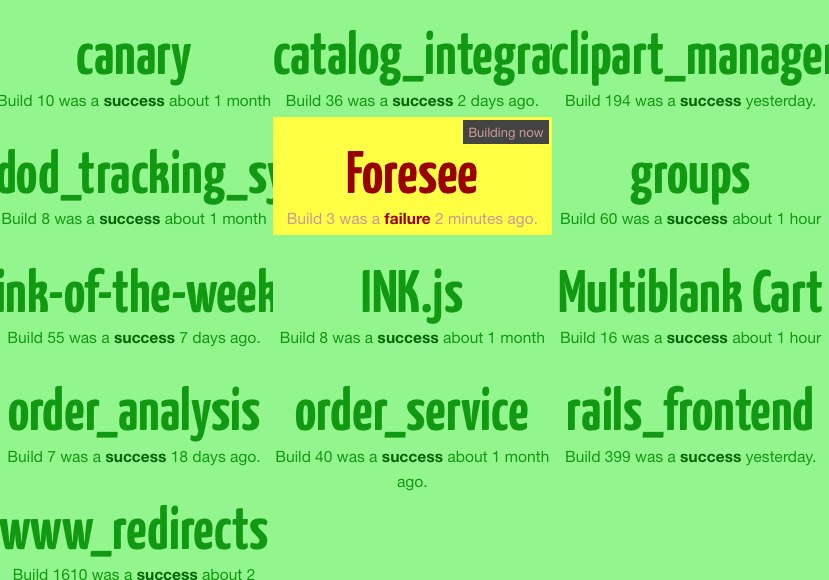

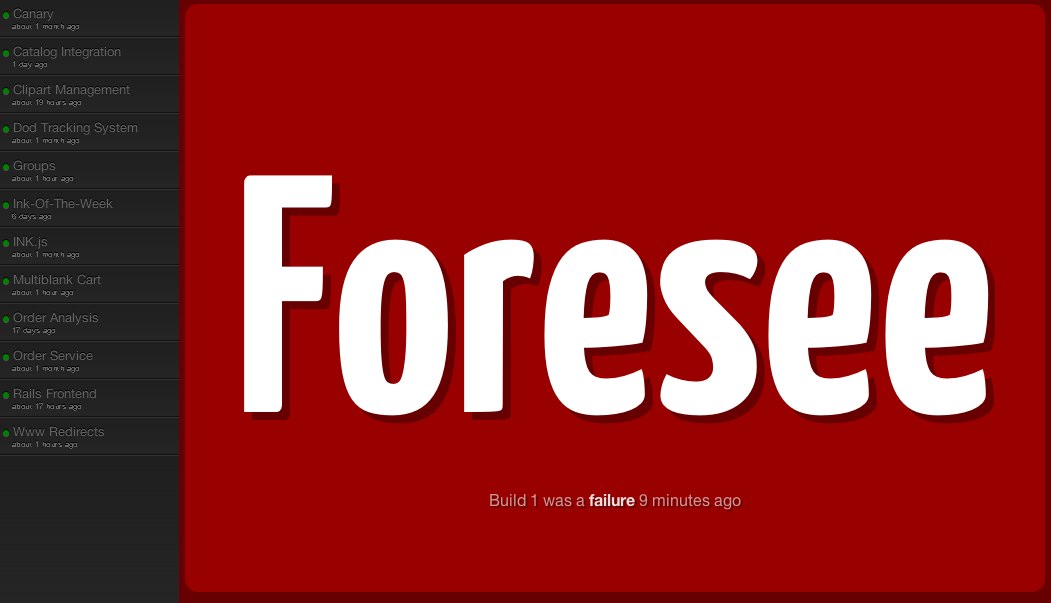

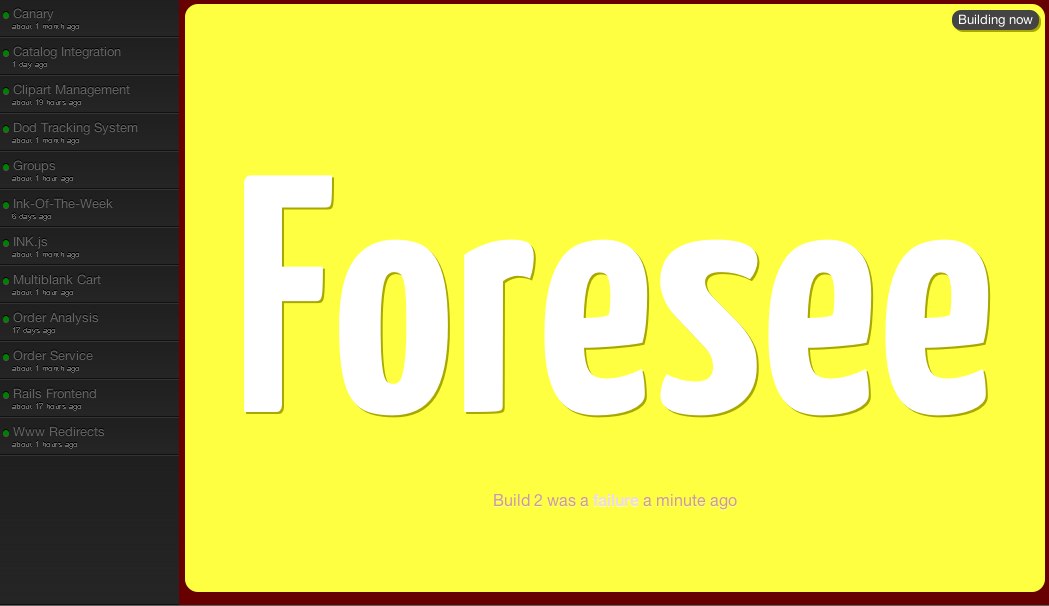

We use Green Screen at CustomInk to look after our continuous integration servers, currently 3 Hudson servers and one Jenkins cluster. We have a monitor mounted in the engineering office that makes it easy for everyone to quickly assess the build status.

We’ve also added support for controlling which builds are displayed from each CI server. You can explicitly include or exclude builds, or keep the default behavior of showing every build on the server.
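The post doesn't show Green Screen's configuration format or code. What follows is only a minimal Python sketch of the selection rule described above, under several assumptions. Each CI server may optionally carry an `include` list and an `exclude` list of build names. When both lists are present, the include filter is applied first and the exclude filter second. The function name `select_builds` and that precedence are hypothetical and are not taken from Green Screen itself.

```python
from typing import Iterable, List, Optional, Sequence


def select_builds(
    builds: Sequence[str],
    include: Optional[Iterable[str]] = None,
    exclude: Optional[Iterable[str]] = None,
) -> List[str]:
    """Pick which builds from one CI server to display (hypothetical rule).

    - Neither list given: show every build (the default behavior).
    - include given: keep only builds named in it.
    - exclude given: drop builds named in it (applied after include).
    The server's original build order is preserved.
    """
    selected = list(builds)
    if include is not None:
        wanted = set(include)
        selected = [b for b in selected if b in wanted]
    if exclude is not None:
        unwanted = set(exclude)
        selected = [b for b in selected if b not in unwanted]
    return selected


if __name__ == "__main__":
    # Hypothetical build names reported by a single CI server.
    builds = ["storefront", "api", "nightly-cleanup"]
    print(select_builds(builds))                              # ['storefront', 'api', 'nightly-cleanup']
    print(select_builds(builds, include=["storefront", "api"]))  # ['storefront', 'api']
    print(select_builds(builds, exclude=["nightly-cleanup"]))    # ['storefront', 'api']
```

Treating "no lists" as "show everything" keeps the default zero-configuration. A server with dozens of jobs then only needs a short exclude list to hide noisy builds.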

I’ve been to a few other similar MongoDB events in NYC and DC, but this was the largest by far. There were over 1,000 attendees and five tracks, plus whiteboard and birds-of-a-feather sessions.